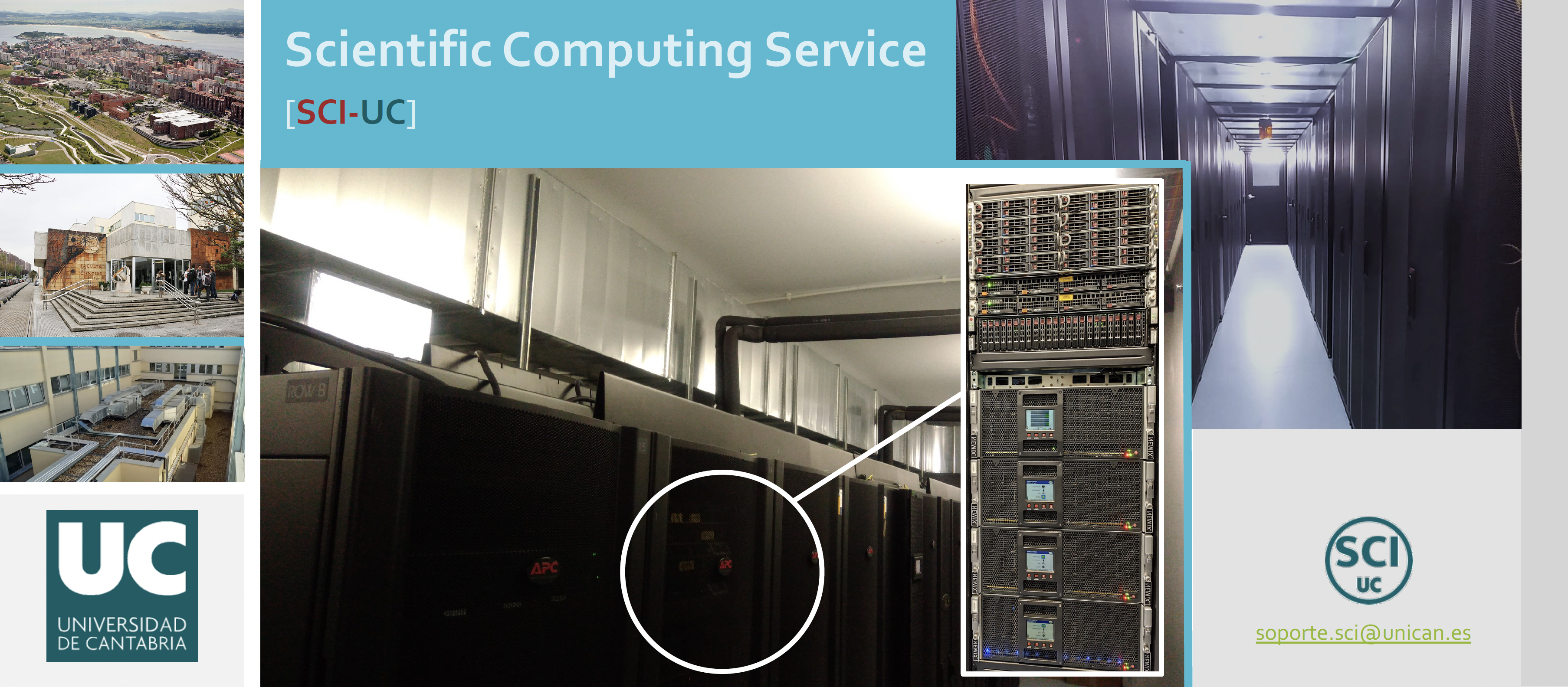

Scientific Computing Service

Servicio de Computación AVANZADA para la Investigación SCI-UC

Universidad de Cantabria

Here you will find all the information related to our resources and services.

Quick Start

To get started quickly, visit the Resource Access Guide and the Slurm User Guide.

If you have any questions, feel free to contact us at soporte.sci@unican.es.

SCI-UC

The Scientific Computing Service at the University of Cantabria (SCI-UC) is a specialized unit that provides technical support and advanced computing services to the university's research groups and to organizations and companies involved in research and innovation. Our main mission is to facilitate access to, and efficient use of, high-performance computing (HPC) infrastructures to accelerate scientific and technological advances.

What We Do

We offer a wide range of services, including:

- Equipment housing and infrastructure hosting: We provide secure housing for research computing equipment within our 3Mares facility (Data Center), including rack space, power supply, cooling, network connectivity, and monitoring, ensuring that externally acquired systems operate in a professionally managed and highly available environment.

- HPC system installation and configuration: We design, deploy, and optimize high-performance computing (HPC) environments tailored to the specific requirements of research projects, ensuring efficient integration with existing infrastructure and workflows.

- Maintenance and technical support: We provide continuous monitoring, maintenance, and periodic upgrades of the computing infrastructure to guarantee reliability, optimal performance, and minimal service disruption.

- Specialized consulting: We collaborate closely with research groups to deliver tailored technical solutions, advise on best practices for efficient resource utilization, and help researchers fully exploit advanced computing capabilities.

- Equipment procurement consulting: We offer expert guidance on the acquisition of hardware and software, helping research groups select technologies that best match the performance, scalability, and budgetary requirements of their projects.

Involved Research Groups

- Department of Earth Sciences and Condensed Matter Physics (CITIMAC)

- Department of Applied Mathematics and Computational Sciences

- Department of Geography, Urban Planning and Spatial Planning

- Department of Ground and Materials Science and Engineering (DCITYM)

- Other Entities

Infrastructure overview

3Mares Data Center

80 m² divided into two work areas:

- Assembly area (equipment assembly and repair)

- Operation area ("cold" room): CUBO AP (Schneider Electric)

- 23 racks (IT)

- 4 Cooling racks (InROWs) & Free Cooling System

- Up to 120 kW of cooling power (70 free cooling)

- 3 Power racks (UPS + PDUs)

- Up to 320 kW of electrical power

Stack hardware

Advanced Computing Servers:

Advanced computing clusters of the research groups (Housing) and the center/service (Hosting):

- > 90 compute working nodes

- > 4600 cores and 15 TB (RAM)

- 2 GPU working nodes

- 2 NVIDIA Quadro RTX 4000 GPUs

Networking:

- 1/10 Gbps Ethernet network

- 200 Gbps HPC InfiniBand network for computation and data storage

Storage:

- Lustre FS: +1.2 PB (high-performance remote storage)

- NFS FS: +80 TB (remote home storage)

- Local scratch (SSD/SATA): +100 TB

- NAS servers: 2 units with 46+64 TB (backups)

Stack Base software

- All working nodes are running Rocky Linux 8.10

Stack HPC software

Objective:

- Hide hardware complexity from the end user

- Facilitate the usability of the overall system

- Simplify management

Our main HPC management platforms:

- Slurm v14.11.3

- Spack v0.23.0 and Module Environment (Lua) v8.7.65

- gcc-13.3.0/gcc-8.5.0-rt6fd

- intel-oneapi-compilers-2024.2.0/gcc-8.5.0-st4zi

- intel-oneapi-mkl-2024.2.0/gcc-8.5.0-q44bf

- intel-oneapi-mpi-2021.14.0/gcc-8.5.0-iywn6

- openblas-0.3.28/gcc-8.5.0-qmqz7

- openmpi-5.0.5/gcc-8.5.0-cuim6

- openmpi-5.0.5/gcc-8.5.0-slurm-ogwvq

- hdf5-1.14.5/gcc-8.5.0-q24s5
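The entries listed above appear to follow a `<package>-<version>/<compiler>-<compiler version>[-<suffix>]-<hash>` pattern. This reading is inferred only from the names shown here and is an assumption, not a documented naming rule of the service. Under that assumption, a minimal Python sketch for splitting such a name into its parts could look like this (the `ModuleName` type and `parse_module_name` function are hypothetical helpers introduced for illustration):

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class ModuleName:
    """Parts of a module name, under the ASSUMED naming pattern."""
    package: str
    version: str
    compiler: str
    compiler_version: str
    suffixes: Tuple[str, ...]  # optional tags such as "slurm"
    build_hash: str


def parse_module_name(name: str) -> ModuleName:
    """Split '<pkg>-<ver>/<compiler>-<cver>[-<suffix>...]-<hash>'.

    The pattern is an assumption inferred from the listed names.
    """
    left, sep, right = name.partition("/")
    if not sep:
        raise ValueError(f"expected one '/' in module name: {name!r}")

    # The package name may itself contain hyphens (e.g. intel-oneapi-mkl),
    # so the version is taken as the last hyphen-separated field.
    package, _, version = left.rpartition("-")

    parts = right.split("-")
    if not package or len(parts) < 3:
        raise ValueError(f"unexpected module name layout: {name!r}")

    return ModuleName(
        package=package,
        version=version,
        compiler=parts[0],
        compiler_version=parts[1],
        suffixes=tuple(parts[2:-1]),  # anything between compiler and hash
        build_hash=parts[-1],
    )


if __name__ == "__main__":
    for entry in (
        "openmpi-5.0.5/gcc-8.5.0-slurm-ogwvq",
        "intel-oneapi-mkl-2024.2.0/gcc-8.5.0-q44bf",
    ):
        print(parse_module_name(entry))
```

Read this way, the two `openmpi-5.0.5` entries differ only in the extra `slurm` tag and the trailing hash, which suggests two separate builds of the same version. Check the module's own description on the cluster before relying on that interpretation.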

System Management Properties:

- Fully custom-developed: learning and optimization

- Exclusively based on open-source software

- Server virtualization

- Continuous process of evolution and improvement

- 99% of the software is free

Our main infrastructure management platforms:

- XCP-ng & Xen Orchestra (XOA)

- Container infrastructure (Docker)

- Rocky Linux 8.10 & 9.6

- Slurm job manager

- Ganglia monitor & Nagios Core

Contact: soporte.sci@unican.es